Supercharge Your Native Ollama.

OllamaGateway adds enterprise-grade API authentication, request auditing, rate limiting, and virtual model management to your native Ollama, giving you a secure, private AI gateway.

Security and Auditing by Design.

Bearer Token Auth

Create multiple API keys for different users and applications, each with its own fine-grained permissions.
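As a rough illustration of what a client call with a Bearer token might look like, the sketch below builds (but does not send) an Ollama-style chat request. The gateway address, the API key, and the virtual model name are hypothetical placeholders, not values defined by OllamaGateway.

```python
import json
import urllib.request

# Hypothetical values: replace with your own gateway address and API key.
GATEWAY_URL = "http://localhost:8080"
API_KEY = "sk-example-key"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an Ollama-style chat request with a Bearer token."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        url=f"{GATEWAY_URL}/api/chat",
        data=json.dumps(body).encode("utf-8"),
        headers={
            # The API key travels in the standard Authorization header.
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("my-virtual-model", "Hello")
# To actually send it: urllib.request.urlopen(request)
```

Because each user or application can hold its own key, rotating or revoking one key should not affect the others.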

ClickHouse Auditing

Detailed auditing of every request and response, stored in ClickHouse for high-performance querying and compliance.

Team Management

A built-in Role-Based Access Control (RBAC) system for managing your team's access to AI models.

Model Keep-alive

Periodically pings underlying models so they stay loaded in memory and respond without a cold-start delay.
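One way such a keep-alive could work is sketched below. It relies on documented Ollama behavior: a `POST /api/generate` request with a model name and no prompt loads the model, and `keep_alive` sets how long it stays in memory. The function names, the interval, and the injected `send` callable are assumptions for illustration; this is not necessarily how OllamaGateway implements the feature.

```python
import threading
from typing import Callable, Iterable


def build_keepalive_payload(model: str, keep_alive: str = "10m") -> dict:
    """Body for POST /api/generate; with no prompt, Ollama only loads the model."""
    return {"model": model, "keep_alive": keep_alive}


def keep_alive_loop(
    models: Iterable[str],
    send: Callable[[dict], None],
    interval_seconds: float,
    stop_event: threading.Event,
) -> None:
    """Ping every model, then sleep, until stop_event is set."""
    models = list(models)
    while not stop_event.is_set():
        for model in models:
            send(build_keepalive_payload(model))
        # wait() returns early if the event is set, so shutdown is prompt.
        stop_event.wait(interval_seconds)
```

Injecting `send` keeps the loop independent of any HTTP library; in practice it would post the payload to the backend, and the interval would be chosen shorter than the `keep_alive` duration.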

Default Model Support

Automatically redirect requests to a default virtual model if no model is specified in the API call.
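The fallback can be pictured as a small resolution step applied before routing. This is a minimal sketch under assumed names: `DEFAULT_VIRTUAL_MODEL` and `resolve_model` are hypothetical, and the real gateway may treat missing models differently.

```python
from typing import Any

# Hypothetical name of the virtual model configured as the default.
DEFAULT_VIRTUAL_MODEL = "default-chat"


def resolve_model(
    request_body: dict[str, Any],
    default_model: str = DEFAULT_VIRTUAL_MODEL,
) -> dict[str, Any]:
    """Return a copy of the request with a model filled in when missing or empty."""
    resolved = dict(request_body)
    if not resolved.get("model"):
        resolved["model"] = default_model
    return resolved
```

A request that already names a model passes through unchanged, so the default only applies to clients that omit the field.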

Native Passthrough

Seamless support for native Ollama features, including MCP tools, embeddings, image inputs, and streaming mode.

Intelligent

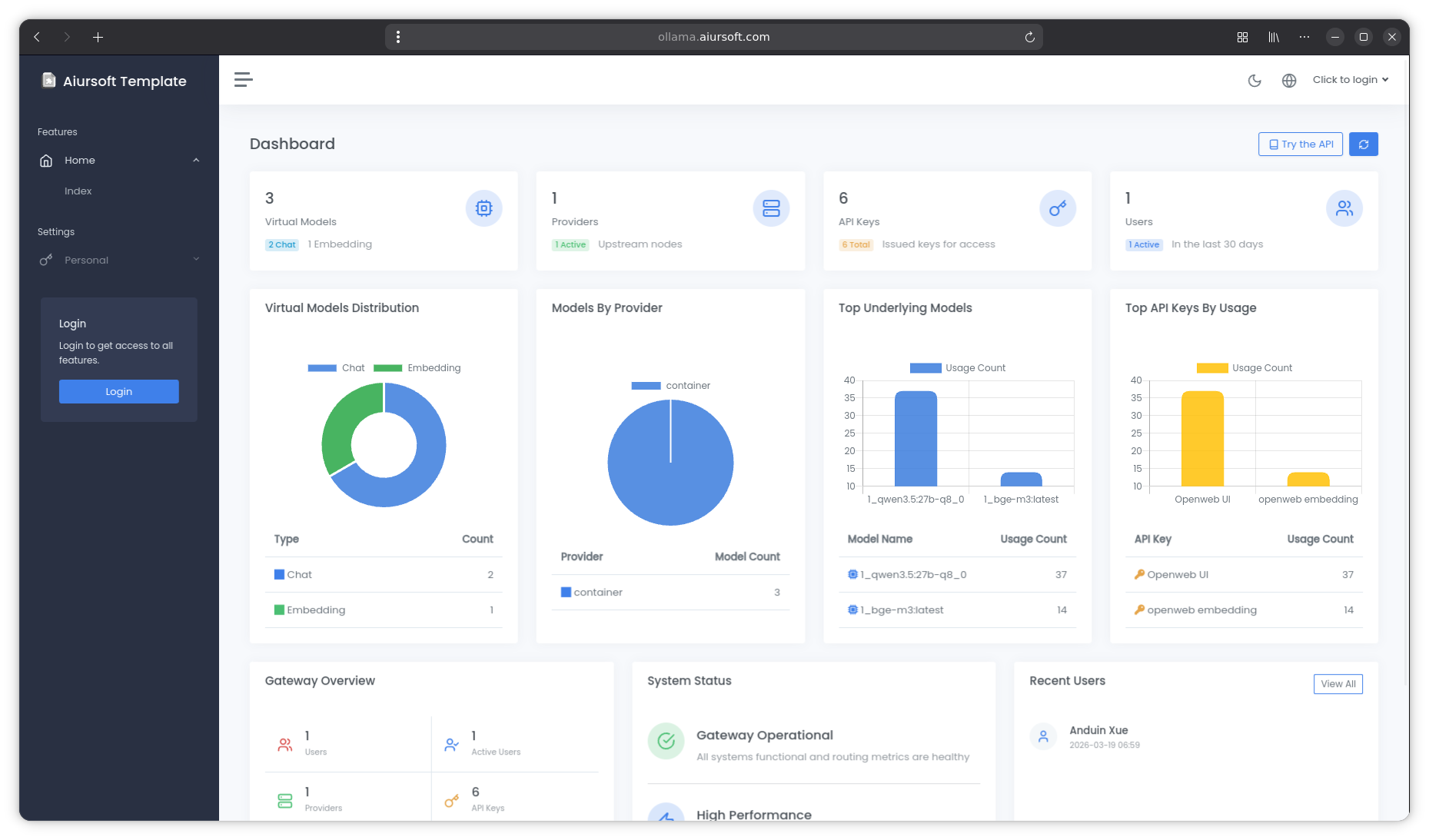

Virtual Models

Create multiple aliases for your models with custom system prompts and parameter overrides.

Chat Models

Create virtual chat models with persistent system prompts.

Embedding Models

Dedicated management of embedding models for RAG applications.

Parameter Overrides

Override temperature, top_k, and other Ollama options per virtual model.

Perfect for Agent Deployment

OllamaGateway has been officially tested and is fully compatible with popular ecosystem tools including Open-WebUI, Opencode, and Roocode. Its robust API translation makes it the ideal choice for deploying autonomous AI agents.

Recommended Models

- qwen3.5:27b-q8_0
- qwen3.5:35b-a3b-q4_K_M

Mix Any Client with Any Backend

OllamaGateway accepts two inbound API formats (OpenAI and Ollama) and routes to two backend provider types (OpenAI-compatible providers and native Ollama), in any combination. Your clients and physical models don't have to speak the same language.

- ① OpenAI Client → OpenAI Backend: /v1/chat/completions → OpenAI-compatible provider
- ② Ollama Client → OpenAI Backend: /api/chat → OpenAI-compatible provider
- ③ OpenAI Client → Ollama Backend: /v1/chat/completions → Native Ollama
- ④ Ollama Client → Ollama Backend: /api/chat → Native Ollama
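To make the idea of format translation concrete, here is a simplified sketch of the OpenAI client to Ollama backend direction. It uses the public field names of the two request formats (`temperature`, `top_p`, and `max_tokens` on the OpenAI side; `options` and `num_predict` on the Ollama side). The function name is hypothetical, only plain-text messages are handled, and the gateway's actual translation is likely more thorough.

```python
from typing import Any


def openai_to_ollama_chat(body: dict[str, Any]) -> dict[str, Any]:
    """Translate an OpenAI /v1/chat/completions body into an Ollama /api/chat body."""
    options: dict[str, Any] = {}
    # Sampling parameters are top-level in OpenAI but nested under "options" in Ollama.
    for key in ("temperature", "top_p"):
        if body.get(key) is not None:
            options[key] = body[key]
    # OpenAI's max_tokens corresponds to Ollama's num_predict option.
    if body.get("max_tokens") is not None:
        options["num_predict"] = body["max_tokens"]

    translated: dict[str, Any] = {
        "model": body["model"],
        "messages": body["messages"],
        "stream": bool(body.get("stream", False)),
    }
    if options:
        translated["options"] = options
    return translated
```

The response would need the reverse mapping before it is returned to the client, which this sketch leaves out.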

| Parameter | ① OpenAI → OpenAI | ② Ollama → OpenAI | ③ OpenAI → Ollama | ④ Ollama → Ollama |
|---|---|---|---|---|
| Temperature | Full support | Full support | Full support | Full support |
| Top P | Full support | Full support | Full support | Full support |
| Top K | Not supported | DB override discarded | DB override | Full support |
| Num Ctx | Not supported | DB override discarded | DB override | Full support |
| Thinking (think) | Not supported | DB override discarded | DB override | Full support |

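When a virtual model has parameters configured in the database (a "DB override"), those values always override what the client sends. Where the table says the override is discarded, the target backend does not accept that parameter, so the configured value is dropped.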
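The precedence rule and the support matrix can be read together as a merge followed by a filter. The sketch below is one interpretation of the table, not the gateway's code: the function name, the parameter key spellings, and the `backend` labels are assumptions.

```python
from typing import Any

# Parameters each backend type accepts, per the table above (key names assumed).
SUPPORTED_BY_BACKEND: dict[str, set[str]] = {
    "openai": {"temperature", "top_p"},
    "ollama": {"temperature", "top_p", "top_k", "num_ctx", "think"},
}


def effective_params(
    client_params: dict[str, Any],
    db_overrides: dict[str, Any],
    backend: str,
) -> dict[str, Any]:
    """Merge client and database values, then drop what the backend cannot accept."""
    supported = SUPPORTED_BY_BACKEND[backend]
    # Later keys win in a dict merge, so database values override client values.
    merged = {**client_params, **db_overrides}
    return {key: value for key, value in merged.items() if key in supported}
```

For example, a virtual model with `top_k` configured would keep that value on a native Ollama backend and lose it on an OpenAI-compatible one.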

Why choose OllamaGateway?

Native Ollama is great for personal use, but it lacks the enterprise features required for team collaboration and production deployment. OllamaGateway fills those gaps without changing your workflow.

| Feature Comparison | Native Ollama | OllamaGateway |
|---|---|---|
| Model Hosting & Inference | | |
| Multimodal | | |
| MCP | | |
| Function call | | |
| Streaming | | |
| OpenAI API Translation | | |
| API Authentication (Bearer) | | |
| Multiple API Keys Management | | |
| Request & Response Auditing | | |
| API Rate Limiting | | |
| Virtual Model Overrides | | |
| Multi-backend Support | | |
| Load Balancing | | |
| Tiered Fallback | | |
| Default Model Support | | |
| Model Keep-alive (Ping) | | |
| Admin Management GUI | | |
| Chat/Embedding Segregation | | |